Featured blog post

Building math proficiency for life


Math isn’t just something you use in life—it’s part of life. That’s why we brought together a dream team of math experts and educators to share the latest in innovation and research.

By Amplify Staff | April 8, 2024

Read post

Explore more posts.

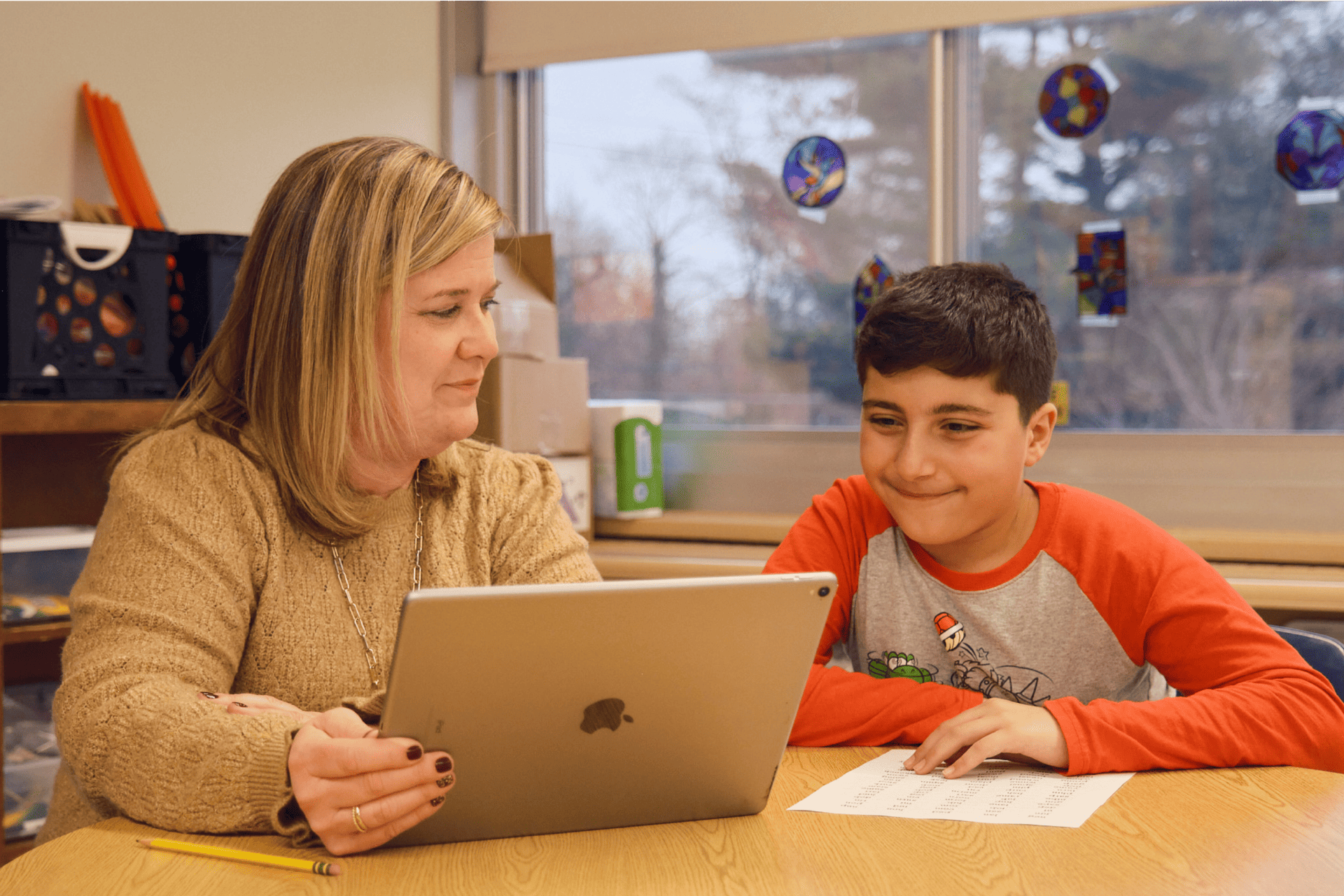

By Elizabeth Sillies, Literacy Coach and Title I Supervisor, Three Rivers Local Schools | April 22, 2024

Suite success: How we support early literacy

Read post

By Amplify Staff | March 18, 2024

The power of data-driven instruction for reading success

Read post

By Amplify Staff | March 4, 2024